Rapiro UI by Bottle + HTML5

Version: 20141206

Brief:

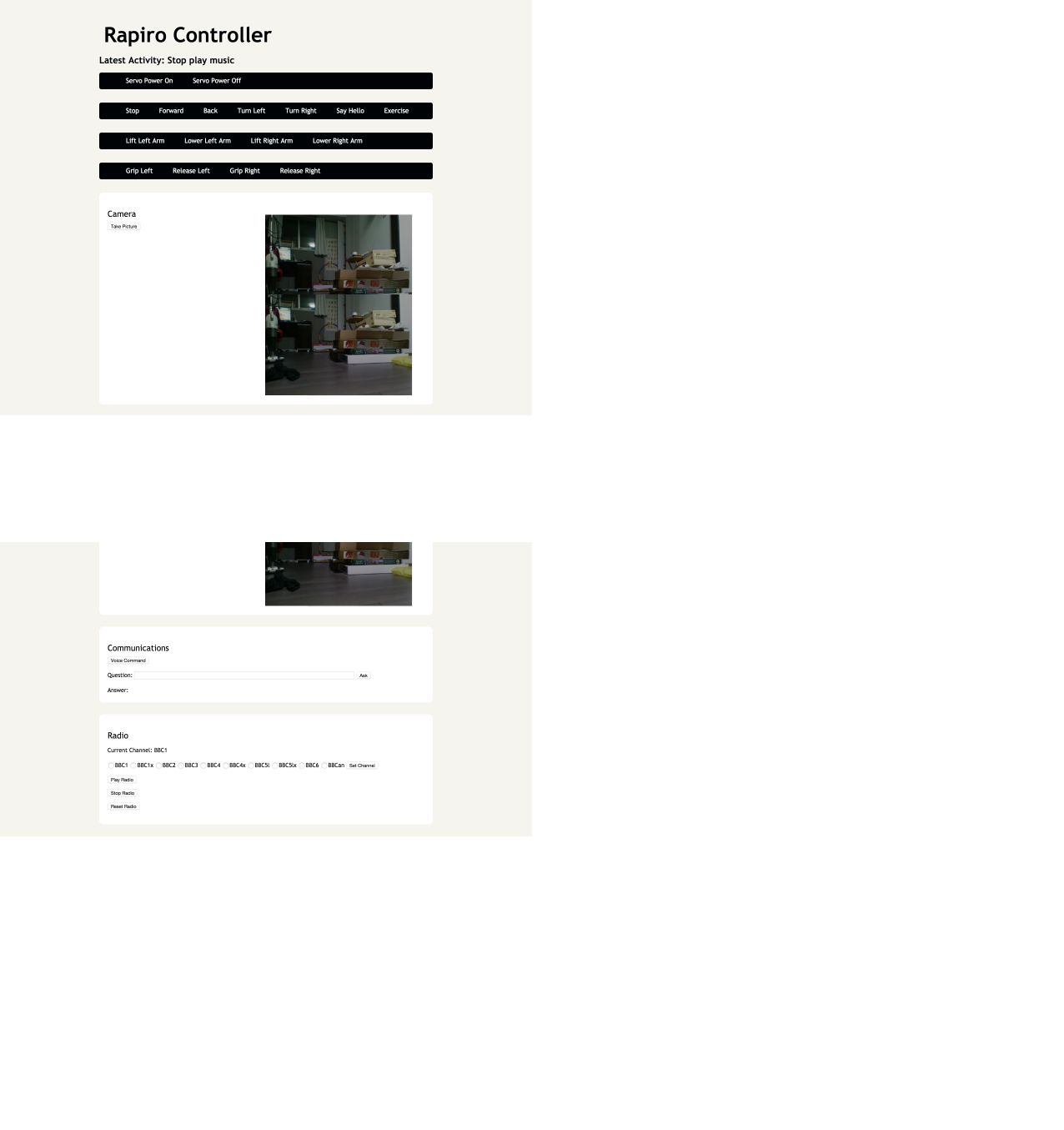

A Bottle framework + HTML5 based user interface to control Rapiro. I had tried NetIO and CGI for the same purpose before, but found this to be a more up-to-date approach, and it is noticeably more responsive than CGI. Functions include movement control (by button and voice), taking pictures with face recognition, querying AI agents by typing or voice, and playing music from a NAS and BBC radio.

I have included all the scripts/resources in the pack, so it should work out of the box. However, many of them come from the authors on the reference list, so I’m somewhat concerned about licensing. I chose this ‘all in the box’ approach because I know well the pain and time it takes to collect the various libraries, and having paid that cost myself, I don’t want others to repeat the process. Please contact me if any library or script included should not be distributed in this manner and I will remove it.

Things to prepare:

* Install Oga-san’s sketch on the Rapiro board (see Reference)

* Applications to install on the Pi (with `sudo apt-get install <package>`)

*** mpg123

*** curl

* Python environment with the following libraries installed

*** serial (pyserial)

*** picamera (not used for now)

*** Wolfram Alpha (you need to register with Wolfram and get an app ID)

* Audio environment set up

*** I’m using a Wolfson audio card and 2 tiny speakers attached to its amplifier output

***** Speakers from here (8 Ω, 0.25 W): https://www.switch-science.com/catalog/671/

***** As the Wolfson card covers the Raspberry Pi’s GPIO header completely, I customized its connector to leave free the 6 pins used by the Rapiro connector, and soldered 6 jumper cables to connect the Pi with the audio card

*** For the camera and microphone, I’m using a webcam (BSW32KM03SV, case removed, glued into Rapiro’s camera hole): its camera takes the pictures and its microphone does the recording.
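Before starting the server, it can save time to confirm that the prerequisites above are actually in place. The following is a small Python 3 sketch (not part of the pack) that checks whether `mpg123` and `curl` are on the PATH and whether the Python modules can be found. The import names `serial` (provided by pyserial) and `wolframalpha` are assumptions about which packages you installed; adjust the lists to match your setup.

```python
import importlib.util
import shutil

# Command-line tools installed with apt-get (from the list above).
REQUIRED_COMMANDS = ("mpg123", "curl")

# Python import names. Assumption: pyserial provides "serial" and the
# Wolfram Alpha client is the "wolframalpha" package; edit to match your setup.
REQUIRED_MODULES = ("serial", "wolframalpha")


def check_environment(commands=REQUIRED_COMMANDS, modules=REQUIRED_MODULES):
    """Return {name: bool}: True if the command is on PATH or the module is importable."""
    status = {}
    for cmd in commands:
        status[cmd] = shutil.which(cmd) is not None
    for mod in modules:
        # find_spec() locates a top-level module without importing it.
        status[mod] = importlib.util.find_spec(mod) is not None
    return status


if __name__ == "__main__":
    for name, ok in sorted(check_environment().items()):
        print("%-14s %s" % (name, "OK" if ok else "MISSING"))
```

Anything reported as MISSING can then be installed before moving on to the usage steps.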

Usage:

* Create a directory and extract the whole pack into it

* Give execute permission to each .sh file (e.g. `chmod +x *.sh`)

* Run ‘bbc_radio_update.sh’ to fetch the radio URL list (this can also be done later with the reset button in the UI)

* Open rapiro_server.py in a text editor, modify the following settings, and save

*** rapiro_name: not used for now

*** wolfram_app_id: your Wolfram Alpha app ID

*** raspberry_pi_ip: your Pi’s IP address

*** nas_music_dir: mounted path to your NAS music directory

*** use_face_recognition: set to True to enable face recognition. Server startup takes some extra time because the face recognizer is trained at startup

*** face_recognition_label_list: list of names of the people you want the recognizer to identify (explained later)

*** face_confidence_thresh: threshold for rejecting false positive results (explained later)

*** GOOGLE_SPEECH_URL_V2: put your Google API key in this line

* Start the server with ‘python rapiro_server.py’

* Open ‘http://your_ip_address:10080’ in a browser on a PC, Mac, tablet, phone, etc.

* Enjoy!
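For reference, the settings edited in rapiro_server.py might look roughly like the fragment below. Only the variable names come from the list above; every value is a made-up placeholder (including the threshold, which has to be tuned for your own face pictures), so replace them with your own.

```python
# Settings near the top of rapiro_server.py (names from the list above).
# All values here are hypothetical placeholders.
rapiro_name = "rapiro"                   # not used for now
wolfram_app_id = "XXXXXX-XXXXXXXXXX"     # your Wolfram Alpha app ID
raspberry_pi_ip = "192.168.0.10"         # your Pi's IP address
nas_music_dir = "/mnt/nas/music"         # mount point of the NAS music share
use_face_recognition = True              # training adds time at server startup
face_recognition_label_list = ["alice", "bob"]  # one directory per name under faces/
face_confidence_thresh = 4000.0          # hypothetical value; tune for your own data
# GOOGLE_SPEECH_URL_V2: keep the URL that is already in the file and put your
# Google key in it; it is not reproduced here.
```

With these placeholder values, the UI would be reached at http://192.168.0.10:10080.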

Using the UI:

*Movement:

*** Please use the self-explanatory buttons
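In a setup like this, a movement button most likely ends up as a short command written to the Rapiro board over the Pi’s serial port (the `serial` library listed above). The sketch below only illustrates that idea and is not the code in the pack: the `#M<n>` strings follow the stock RAPIRO firmware’s motion commands as I understand them, and the port `/dev/ttyAMA0` at 57600 baud is also an assumption, so check both against Oga-san’s sketch and your wiring.

```python
# Hypothetical mapping from button/voice names to RAPIRO motion commands.
# Assumption: "#M<n>" strings as in the stock RAPIRO firmware; verify them
# against the sketch actually flashed to your board before relying on them.
MOTION_COMMANDS = {
    "stop": "#M0",
    "forward": "#M1",
    "back": "#M2",
    "turn right": "#M3",
    "turn left": "#M4",
}


def send_motion(port, name):
    """Write the command for a named movement to a writable binary stream.

    `port` can be a pyserial Serial object or any file-like object with write().
    Returns the command string that was sent.
    """
    command = MOTION_COMMANDS.get(name)
    if command is None:
        raise ValueError("unknown movement: %r" % name)
    port.write(command.encode("ascii"))
    return command


# On the Pi this might be used like this (assumed port and baud rate):
#   import serial
#   with serial.Serial("/dev/ttyAMA0", 57600, timeout=1) as rapiro:
#       send_motion(rapiro, "forward")
```

Taking any object with `write()` keeps the mapping testable without a robot attached, for example with an in-memory `io.BytesIO`.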

*Take picture:

*** The button takes a picture. If use_face_recognition is True, face detection and recognition are run, and each detected face is marked with a green rectangle. Preparation for recognition is explained later.

*Communication:

*** Voice Command button: records your voice, sends it to the Google Speech API (v2), and uses the list below to decide the response. Note that the Google API allows only 50 queries per day. The same commands can also be typed into the form.

*** Default (anything not matching the commands below): Query Wolfram Alpha and display/speak the answer

*** repeat ABC: Display and speak ABC

*** cleverbot ABC: Query Cleverbot with ‘ABC’

*** meow: Rapiro will meow

*** take picture: Take a picture. Same as the Take picture button above.

*** play music ABC: Search the NAS music directory for a sub-directory with ABC in its name (partial matches are supported), collect all files inside it and pass them to mpg123 for playback.

*** stop music: Stop playing music

*** hello, stop, exercise, forward, back, turn left, turn right, etc.: Same as the movement buttons
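The command list above is essentially a prefix-matching dispatcher. The sketch below shows one way to express it in Python, together with the directory search behind ‘play music ABC’; it is an illustration, not the code in rapiro_server.py. The action names, the `.mp3`-only filter and the case-insensitive matching are assumptions. Only the command line that would be passed to mpg123 is built here; starting and stopping the player (e.g. with `subprocess.Popen` and `terminate()`) is left out.

```python
import os

# Phrases that behave like the movement buttons (the subset named in the text).
MOVEMENT_PHRASES = ("hello", "stop", "exercise", "forward", "back",
                    "turn left", "turn right")

# Prefix commands that take an argument: (prefix, action name).
PREFIX_COMMANDS = (("repeat ", "repeat"),
                   ("cleverbot ", "cleverbot"),
                   ("play music ", "play_music"))

# Assumption: only .mp3 files are handed to mpg123.
AUDIO_EXTENSIONS = (".mp3",)


def parse_command(text):
    """Map typed or recognized text to an (action, argument) pair."""
    text = text.strip()
    lower = text.lower()
    for prefix, action in PREFIX_COMMANDS:
        if lower.startswith(prefix):
            return action, text[len(prefix):].strip()
    if lower == "meow":
        return "meow", ""
    if lower == "take picture":
        return "take_picture", ""
    if lower == "stop music":
        return "stop_music", ""
    if lower in MOVEMENT_PHRASES:
        return "move", lower
    # Anything else falls through to Wolfram Alpha.
    return "wolfram", text


def find_music_files(music_dir, keyword):
    """Collect audio files under every sub-directory whose name contains keyword."""
    key = keyword.lower()
    found = []
    for root, dirs, _files in os.walk(music_dir):
        dirs.sort()
        matched = [d for d in dirs if key in d.lower()]
        for d in matched:
            # Take everything inside the matching directory, including nested folders.
            for sub_root, sub_dirs, files in os.walk(os.path.join(root, d)):
                sub_dirs.sort()
                found.extend(os.path.join(sub_root, f) for f in sorted(files)
                             if f.lower().endswith(AUDIO_EXTENSIONS))
        # Prune matched directories so their files are not collected twice.
        dirs[:] = [d for d in dirs if d not in matched]
    return found


def music_command(files):
    """Build the mpg123 command line (-q = quiet) for the collected files."""
    return ["mpg123", "-q"] + list(files)
```

Checking the exact phrase "stop music" separately from the movement phrase "stop" keeps the two from being confused, since only exact matches are treated as movements.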

*Radio:

*** Self-explanatory

*About face recognition:

*** Face detection is done with a Haar cascade, and recognition with Eigenfaces. The recognition part needs some preparation. First, create a directory under faces for each person, named after that person (these names go into face_recognition_label_list). Then put more than 30 grayscale pictures of that person inside the directory. Each picture should be around 100×100 pixels, and the face should occupy most of it. Some variation in face angle (perhaps ±10 degrees) improves recognition. I plan to include a tool that automatically crops faces from a set of pictures in the future.
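Since recognition quality depends on this folder layout, a quick check before starting the server may help. The sketch below (not included in the pack) counts the image files in `faces/<name>` for each name in face_recognition_label_list and flags anyone with 30 or fewer. The accepted file extensions and the example labels are assumptions, and it does not check grayscale or the ~100×100 size, which would need an image library.

```python
import os

# Assumption: the image formats you saved the face pictures in.
IMAGE_EXTENSIONS = (".png", ".jpg", ".jpeg", ".pgm", ".bmp")


def check_face_dataset(faces_dir, labels, more_than=30):
    """Return {label: (image_count, enough)} for each name in labels.

    Each person is expected to have a directory faces_dir/<label> holding
    more than `more_than` pictures, as described above.
    """
    report = {}
    for label in labels:
        person_dir = os.path.join(faces_dir, label)
        if os.path.isdir(person_dir):
            count = sum(1 for name in os.listdir(person_dir)
                        if name.lower().endswith(IMAGE_EXTENSIONS))
        else:
            count = 0  # directory missing
        report[label] = (count, count > more_than)
    return report


if __name__ == "__main__":
    # Hypothetical labels; use the same list as face_recognition_label_list.
    for label, (count, ok) in check_face_dataset("faces", ["alice", "bob"]).items():
        print("%-10s %3d images  %s" % (label, count, "OK" if ok else "need more"))
```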

Reference:

Bottle framework:

The official documentation

(The idea to use bottle as server framework is inspired by this blog: http://make-muda.weblike.jp/tag/rapiro/)

Wolfson audio card installation (followed the PDF attached to this thread):

http://www.element14.com/community/thread/31714/l/instructions-for-compiling-the-wolfson-audio-card-kernel-drivers-and-supported-use-cases

HTML5+CSS3 (followed this tutorial):

http://www.smashingmagazine.com/2009/08/04/designing-a-html-5-layout-from-scratch/

Rapiro Arduino sketch (written by Oga-san):

https://github.com/oga00000001/RAPIRO/archive/b1368d6efa66485bb0f48916a013a517f28a9018.zip

BBC radio:

http://blog.scphillips.com/2014/05/bbc-radio-on-the-raspberry-pi/

Google text-to-speech API script:

http://danfountain.com/2013/03/raspberry-pi-text-to-speech/

Google speech-to-text API and how to obtain a key:

http://progfruits.wordpress.com/2014/05/31/using-google-speech-api-from-python/

Download link

https://app.box.com/s/1ih72fs37vf01pxp358d

This is awesome! I'm surprised no one has commented on this. Does all of this still work?
